Elan Barenholtz, Ph.D. — Associate Professor, Florida Atlantic University · MPCR Lab · YouTube

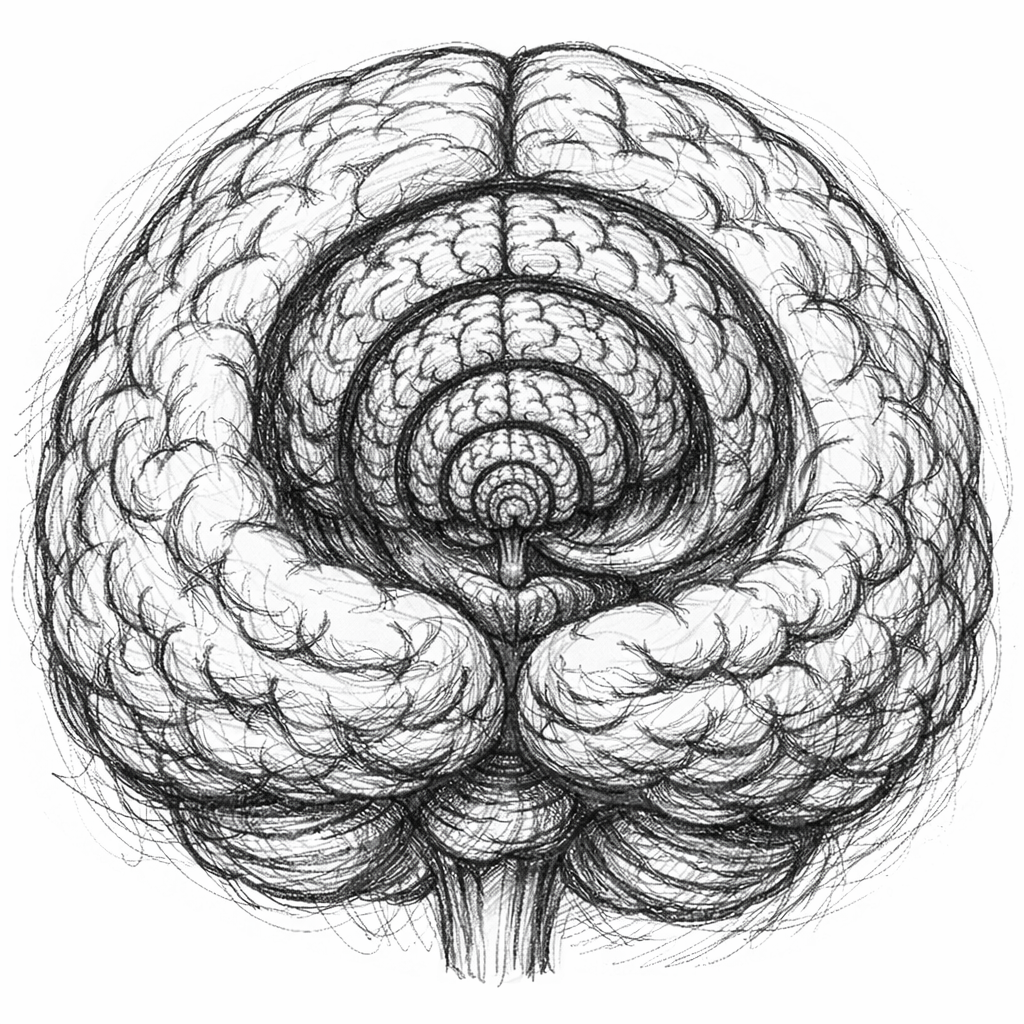

The Autoregressive Brain

A unified theory of cognition

In this framework, perception, memory, language, reasoning, and action are not separate faculties. They are aspects of the same autoregressive process: the brain generating each next state as the optimal continuation of the preceding trajectory. What we call memory is the continued influence of past generation on present generation. What we call attention is the convergence of multiple modalities into a single next state. What we call behavior is generated content that happens to move muscles.

Large language models are not merely analogies: they run the linguistic case of the same architecture. Autoregressive generation over learned distributional structure produces composition, context-sensitivity, and apparent understanding without any of the machinery cognitive science has traditionally assumed is required.
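To make "autoregressive generation over learned distributional structure" concrete, here is a minimal sketch in Python. It is a toy bigram model, vastly simpler than any large language model and not the implementation behind the framework. The function names `train_bigram` and `generate` and the tiny corpus are assumptions made for this illustration. It shows only the core loop: learn which tokens tend to follow which, then produce each next token conditioned on what was just generated.

```python
import random
from collections import Counter, defaultdict


def train_bigram(tokens):
    """Learn distributional structure: count how often each token follows another."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def generate(counts, start, length, seed=0):
    """Autoregressively extend a sequence.

    Each new token is sampled in proportion to how often it followed the
    previously generated token in the training data (hypothetical toy setup).
    """
    rng = random.Random(seed)
    out = [start]
    for _ in range(length):
        followers = counts.get(out[-1])
        if not followers:  # no observed continuation, so stop early
            break
        choices, weights = zip(*followers.items())
        out.append(rng.choices(choices, weights=weights, k=1)[0])
    return out


# Illustrative corpus, invented for this example.
corpus = "the dog chased the cat and the cat chased the bird".split()
model = train_bigram(corpus)
sample = generate(model, "the", 8)
print(" ".join(sample))
```

Nothing in the sketch stores a sentence or encodes a grammatical rule. Every generated word pair is one that occurred in the corpus, yet the sequence as a whole need not have appeared anywhere in it.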

A new framework for cognition, memory, and language

Generation, Not Retrieval

Remembering is reconstructive generation, not playback from storage. Memory is the capacity to regenerate experiential trajectories, constrained by learned structure.

Syntax as Distributional Epiphenomenon

What linguists call "grammar" is not a rule system but the skeletal structure of distributional statistics. Function words are high-leverage tokens that constrain sequential prediction.

Consciousness as Recursive Autoregression

Subjective experience emerges from the cognitive system modeling its own generative process. The "self" is a trajectory through state-space, not an entity.

LLMs as Existence Proofs

Large language models demonstrate that composition, apparent reasoning, and memory-like behavior can emerge from autoregressive prediction without explicit storage or rule systems.

Latest Writing

From the blog

April 15, 2026

Comprehensive List: 74 Phenomena Explained by Autoregressive Theory

The autoregressive brain framework proposes a single computational principle underlying cognition: each mental state is generated as the optimal continuation of the preceding trajectory. Below is the full compilation of phenomena this principle explains.

April 13, 2026

Syntax Without Rules: The Distributional Skeleton of Language

The most consequential finding in modern linguistics did not come from a linguistics department. It came from scaling next-token prediction: large language models acquire grammatical competence — subject-verb agreement across long dependencies, recursive embedding, tense consistency, productive morphology, pragmatic sensitivity...

April 13, 2026

Memory as Trajectory Regeneration

The storage-retrieval model of memory has been the default framework in cognitive science since Ebbinghaus. Experiences are encoded, consolidated into stable representations, and later retrieved. The metaphor shifts with each generation — wax tablets, filing cabinets, hard drives — but...

Videos

Conversations and presentations on the autoregressive mind

The (Disturbing) Theory of Autoregressive Minds

Theories of Everything with Curt Jaimungal

Discussion with Michael Levin

Thoughtforms Life